On September 27, we reached our second milestone and demonstrated the core abilities of the ILIAD system live to invited industry representatives at the National Centre for Food Manufacturing in Holbeach, UK.

In the set of demonstrations, we showcased the current implementation of core components for deployment and operation – from when the first ILIAD robot is unpacked at a new site, to fleet coordination and object detection.

The first action when deploying a self-driving warehouse robot should be to calibrate its sensors. The robots in ILIAD do not use any pre-installed guides or markers, but rather use on-board sensors to construct a map of the environment, which is used for localisation and planning. However, in order to do so, each robot must know precisely the position and orientation of its cameras and other sensors. If, for example, a sensor has been shifted from its factory-default mounting during transport, that would affect the reliability and precision of the robot’s operation. Therefore, the first task should be to carefully calibrate the sensors on the robot.

In ILIAD, we have implemented a self-calibration routine, which saves considerable time and work during deployment compared to the tedious standard procedure of calibrating with custom-made calibration targets. A user only has to walk around with the robot for a few seconds (as seen on the right) while the calibration software is running in order to precisely determine the positions of the sensors. The process is visualised in the video below, which shows a top-down view of the hall where the robot is first deployed. What we first see in this video is the outline of the walls and floor, as seen by the laser range scanner on the robot. When the robot starts moving, without knowing where its sensors are, the room looks very blurry. At 16 seconds into the video, the algorithm finds the correct calibration of the sensor position, after which the image clears up again, even while the robot is still moving.
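The link between a wrong sensor pose and a blurry picture can be illustrated with a toy example. This is not the ILIAD calibration algorithm, which is not described here. It is a minimal sketch that assumes only the sensor's yaw angle is unknown, that the robot poses are known, and that the sensor sees a few point landmarks with known correspondence. All names (`to_world`, `blur`, `calibrate_yaw`) are hypothetical. The sketch tries candidate yaw angles and keeps the one for which the observations from different robot poses line up best.

```python
import math

def to_world(pose, sensor_yaw, point):
    """Map a point from the sensor frame to the world frame."""
    x, y, theta = pose
    angle = theta + sensor_yaw
    px, py = point
    return (x + px * math.cos(angle) - py * math.sin(angle),
            y + px * math.sin(angle) + py * math.cos(angle))

def observe(pose, sensor_yaw, landmark):
    """Simulate a measurement in the sensor frame (inverse of to_world)."""
    x, y, theta = pose
    angle = theta + sensor_yaw
    dx, dy = landmark[0] - x, landmark[1] - y
    return (dx * math.cos(angle) + dy * math.sin(angle),
            -dx * math.sin(angle) + dy * math.cos(angle))

def blur(poses, scans, sensor_yaw):
    """Sum of squared spread of each landmark's reprojected positions."""
    total = 0.0
    for j in range(len(scans[0])):
        pts = [to_world(p, sensor_yaw, s[j]) for p, s in zip(poses, scans)]
        mx = sum(p[0] for p in pts) / len(pts)
        my = sum(p[1] for p in pts) / len(pts)
        total += sum((px - mx) ** 2 + (py - my) ** 2 for px, py in pts)
    return total

def calibrate_yaw(poses, scans, step_deg=0.5, span_deg=30.0):
    """Grid search: return the candidate yaw that gives the sharpest picture."""
    steps = int(round(2 * span_deg / step_deg))
    candidates = [math.radians(-span_deg + i * step_deg) for i in range(steps + 1)]
    return min(candidates, key=lambda yaw: blur(poses, scans, yaw))

# Simulated data (assumed values): the sensor was knocked 7.5 degrees off.
TRUE_YAW = math.radians(7.5)
LANDMARKS = [(5.0, 0.0), (3.0, 4.0), (-1.0, 3.0)]
POSES = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.3), (2.0, 1.0, 0.9), (2.0, 2.0, 1.5)]
SCANS = [[observe(p, TRUE_YAW, lm) for lm in LANDMARKS] for p in POSES]

estimated = calibrate_yaw(POSES, SCANS)
print(f"estimated yaw: {math.degrees(estimated):.1f} degrees")
```

In this toy model the robot has to change position, not just turn on the spot, for the yaw error to show up as blur.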

Once the calibration is complete, the next step is to construct a consistent map. Traditionally, truck localisation is achieved by first installing specific markers in the environment, then manually surveying them, after which they can be used as references to compute the relative position of the truck. In contrast, the robots in ILIAD are walked through the environment once. During this walk they record the shape of the environment, accurately compensate for any drift that occurs while driving, and automatically remove moving obstacles from the map, so that only the stationary structures remain.
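One simple way to picture the removal of moving obstacles is to keep only the map cells that were occupied nearly every time they were observed. The sketch below is an illustration under that assumption, not the mapping method used in ILIAD. The grid representation, the function name `static_cells` and the `min_ratio` threshold are all hypothetical.

```python
from collections import Counter

def static_cells(scans, min_ratio=0.8):
    """Keep cells that were occupied in at least min_ratio of their observations.

    Each scan is a pair (occupied_cells, observed_cells) of sets of grid cells.
    """
    hits, seen = Counter(), Counter()
    for occupied, observed in scans:
        hits.update(occupied)
        seen.update(observed | occupied)  # an occupied cell was also observed
    return {cell for cell in hits if hits[cell] / seen[cell] >= min_ratio}

# A 3 x 4 grid with a wall along the bottom row and a person walking past.
OBSERVED = {(x, y) for x in range(3) for y in range(4)}
WALL = {(0, 0), (1, 0), (2, 0)}
PERSON_TRACK = [(0, 2), (1, 2), (2, 2), (2, 3)]
SCANS = [(WALL | {person}, OBSERVED) for person in PERSON_TRACK]

print(sorted(static_cells(SCANS)))
```

The person occupies each cell in only one of four scans, so those cells fall below the threshold and only the wall remains.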

Given an annotation of the map, assigning positions where each product is stored, the fleet is now ready for orders. As of now, the assignment of places is done manually, but automatic methods to assist in this process are planned for the end of the project.

Assuming that the fleet is connected to a warehouse management system that maintains orders, for each new order, tasks are assigned to the fleet. The video below shows a list of orders, for objects to be put on a pallet. Given information about the shapes and weights of each type of package, the system plans how each box should be stacked on the pallet.
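As a rough illustration of how shape and weight information can drive a stacking plan, the sketch below uses a deliberately naive heuristic: heaviest boxes first, filling each layer until its footprint area is used up. This is an assumption made for the example, not the planner used in ILIAD. The `Box` type, `plan_layers` and the pallet dimensions are hypothetical, and comparing areas ignores whether the boxes actually fit geometrically.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Box:
    name: str
    length: float  # metres
    width: float   # metres
    weight: float  # kilograms

def plan_layers(boxes, pallet_area=1.2 * 0.8):
    """Group boxes into layers, bottom layer first, heaviest boxes lowest."""
    ordered = sorted(boxes, key=lambda b: (-b.weight, -(b.length * b.width)))
    layers, current, used = [], [], 0.0
    for box in ordered:
        area = box.length * box.width
        if current and used + area > pallet_area:
            layers.append(current)  # layer is full, start the next one
            current, used = [], 0.0
        current.append(box)
        used += area
    if current:
        layers.append(current)
    return layers

ORDER = [
    Box("A", 0.6, 0.4, 20.0),
    Box("B", 0.6, 0.4, 5.0),
    Box("C", 0.4, 0.3, 12.0),
    Box("D", 0.6, 0.8, 8.0),
    Box("E", 0.6, 0.4, 15.0),
]

for level, layer in enumerate(plan_layers(ORDER), start=1):
    print(level, [box.name for box in layer])
```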

Now that each robot knows in which order to put objects on the pallet, the system plans how the fleet of robots should move in order to fulfill the order. The video below shows an example with a fleet of two robots. Given the map created during the deployment phase, the robots plan and coordinate their paths on the fly, without the need to manually design paths or traffic rules. This on-line motion planning and coordination further cuts the deployment effort of the system and adds to its flexibility, as it makes it possible for robots to replan, for example in case of unexpected obstacles.
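The idea of coordinating paths without fixed traffic rules can be sketched for two robots on a grid. The example below is a simplification and not the ILIAD coordinator: it assumes each path is a list of grid cells with one cell per time step, that robots stay at their goal once they arrive, and that conflicts are resolved by making the second robot wait at its start. The names `first_conflict` and `coordinate` are hypothetical.

```python
def first_conflict(path_a, path_b):
    """Return the first time step at which two timed paths collide, else None."""
    def at(path, t):
        return path[min(t, len(path) - 1)]  # robots stay at their goal

    for t in range(max(len(path_a), len(path_b))):
        if at(path_a, t) == at(path_b, t):
            return t  # both robots in the same cell
        if t > 0 and at(path_a, t) == at(path_b, t - 1) \
                and at(path_a, t - 1) == at(path_b, t):
            return t  # robots swap cells head-on
    return None

def coordinate(path_a, path_b, max_delay=20):
    """Delay robot B at its start until its path no longer conflicts with A."""
    for delay in range(max_delay + 1):
        delayed = [path_b[0]] * delay + path_b
        if first_conflict(path_a, delayed) is None:
            return delayed
    raise ValueError("no conflict-free delay found")

# Robot A drives along a corridor, robot B crosses it at cell (2, 1).
PATH_A = [(0, 1), (1, 1), (2, 1), (3, 1), (4, 1)]
PATH_B = [(2, 3), (2, 2), (2, 1), (2, 0)]

print("conflict at step:", first_conflict(PATH_A, PATH_B))
print("coordinated path for B:", coordinate(PATH_A, PATH_B))
```

Because the check runs on the current paths, the same function can be called again whenever a robot replans around an unexpected obstacle.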

In this milestone demonstration, we also showed picking of multiple types of objects with a dual-arm manipulator. The arms are not currently integrated with the truck platforms. Instead, the picking was demonstrated via video link from the University of Pisa. Once a robot truck with arms reaches a picking location, this is how it will pick objects and place them on the pallet of the current order.

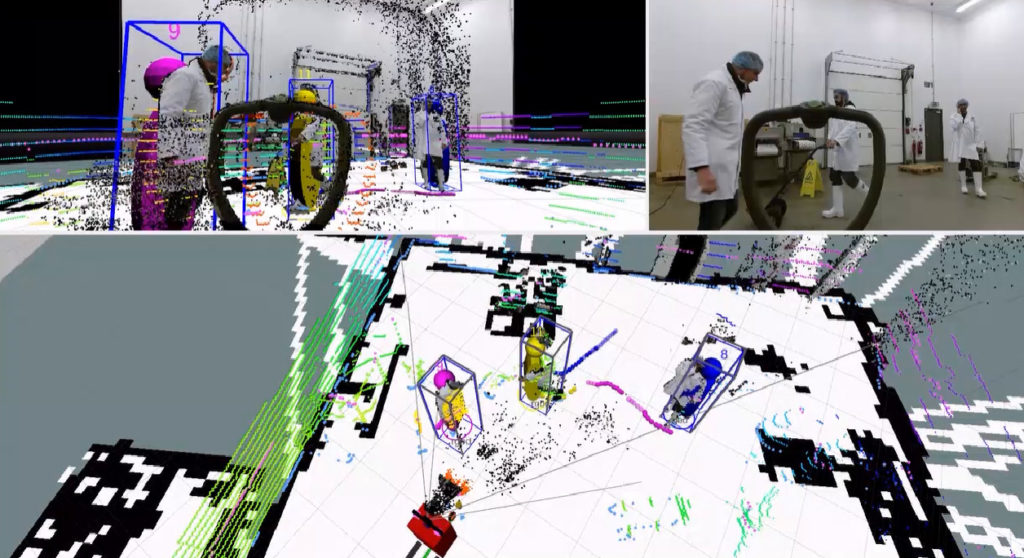

One of the key aspects of ILIAD (in addition to facilitating automatic deployment and adaptation to changing environments) is safe operation among people. One of the cornerstones of this capability is to reliably detect persons in the surroundings of the robot. We demonstrated people detection and tracking software.

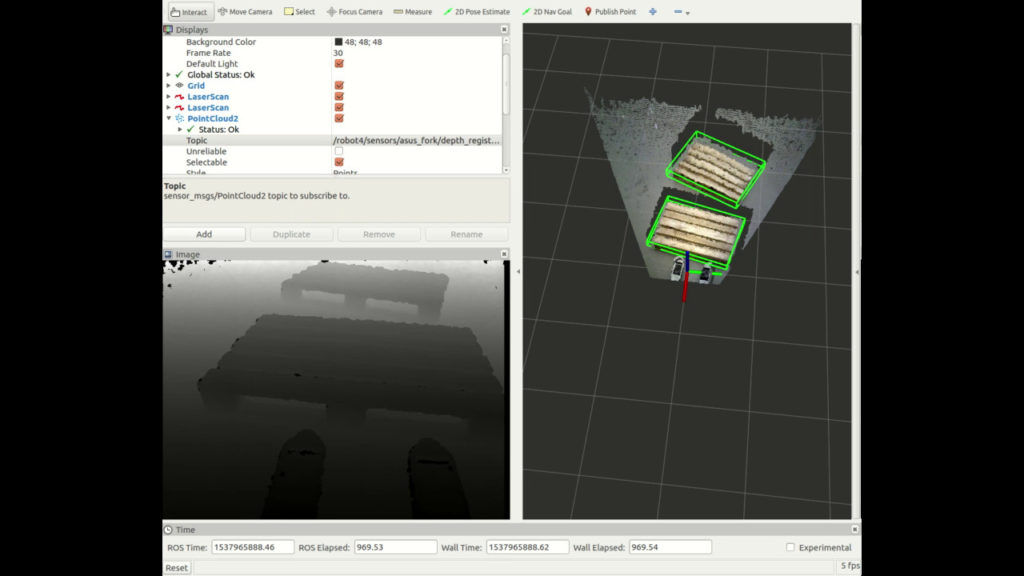

Finally, we demonstrated the present version of our object detection module, specifically its performance when it comes to detecting pallets.

In conclusion, this milestone demonstration showed the integration of functional prototypes of some of the most central capabilities of the ILIAD system. In October 2019, at the Milestone 3 demo, we will also demonstrate the integration of the more long-term aspects of ILIAD, this time at a real-world warehouse of food manufacturer Orkla Foods.