On October 16, 2019, we reached our third milestone at the second live physical ILIAD project demonstration. The demo coincided with the second stakeholder meeting with invited industry representatives, at Orkla Foods’ facilities in Örebro, Sweden.

Orkla Foods presented ILIAD and its uses from an end-user perspective, and industrial partners Kollmorgen Automation and Logistic Engineering Services placed the project in the context of current industry developments worldwide. After the general overview, the participants were given a live demonstration of the capabilities of the ILIAD robot fleet inside the ambient-temperature warehouse at Orkla Foods, as detailed below. The stakeholder meeting then concluded with coffee, snacks from the factory, and informal conversation between the guests and the developers.

In the set of demonstrations, we showcased the current use of core components for deployment and operation—from when the first ILIAD robot is unpacked at a new site, to fleet coordination, navigation and people awareness, and object picking and unwrapping.

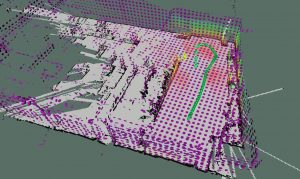

We demonstrated 3D mapping by driving one of the trucks equipped with a 3D lidar. Moving obstacles are automatically filtered from the map, and a 2D obstacle grid map is extracted from the fully-3D map to be used for further navigation and motion planning.

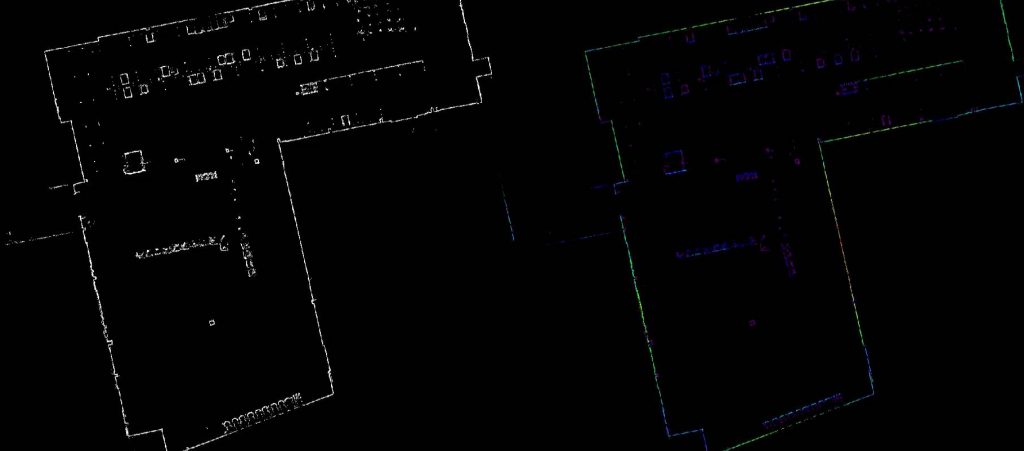
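The extraction of a 2D obstacle grid from a 3D map, described above, can be illustrated with a minimal sketch. This is not the ILIAD mapping code: the function name, the height-band thresholds, and the cell size are assumptions chosen for illustration, and the filtering of moving obstacles is not shown.

```python
from math import floor

def extract_obstacle_grid(points, cell_size, z_min, z_max):
    """Project 3D points onto a 2D grid and return the occupied (ix, iy) cells.

    Hypothetical helper: only points whose height lies in [z_min, z_max]
    (the band a truck could collide with) are treated as obstacles.
    """
    occupied = set()
    for x, y, z in points:
        if z_min <= z <= z_max:
            occupied.add((floor(x / cell_size), floor(y / cell_size)))
    return occupied

# Made-up sample points (metres), not data from the demo.
points = [
    (0.2, 0.3, 0.5),    # obstacle at truck height
    (0.25, 0.35, 1.0),  # falls in the same grid cell as the point above
    (1.7, 0.1, 0.02),   # floor return, below the height band
    (2.4, 1.1, 4.0),    # ceiling structure, above the height band
    (-0.6, 0.9, 0.8),   # obstacle at negative x
]
grid = extract_obstacle_grid(points, cell_size=0.5, z_min=0.1, z_max=2.0)
print(sorted(grid))
```

Using `floor` rather than integer truncation keeps cell indices consistent on both sides of the map origin.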

A novel addition, first demonstrated at MS3, was the map scoring tool, which aims to automatically highlight problem areas of the map, such as clutter or registration errors. One use case is to help a deployment engineer verify that the map constructed in the previous stage is consistent and useful. The picture below shows a complete map of the warehouse. To the left is the original map, and to the right is the output of the automatic map scoring tool, which gives structural elements a high score (green) and gives a low score to clutter and potential mapping errors (such as pallets that should not be part of the map, or an outside loading bay that was only partially observed).

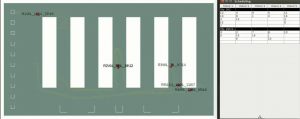

As at MS2, we demonstrated automated task planning when a simulated order is received. Given a product list for the order, the OPT Loading software provided by ACT Operations Research plans how each object should be stacked on each pallet for efficiency and stability. The fleet management layer then sends tasks to the robots in the fleet, which fetch pallets and pick products in order, so that each pallet is filled as planned.

A new addition at MS3, compared to MS2, was dynamic task re-allocation on the fly as requirements change. Re-allocation is needed when unexpected delays or faults are encountered, so that the tasks of the fleet must be rescheduled or shifted to other robots. This part was shown in simulation, in order to better demonstrate the capabilities in an environment with more robots, and a larger warehouse, than what was physically available at the demo site. In the pictures below, you see pallet planning, given a set of products to go in an order. To the right are two tables with task schedules, the second created automatically after an unexpected delay of one of the trucks.
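To make the idea of re-allocation after a delay concrete, here is a minimal sketch. It uses a simple greedy rule (give each task to the robot that becomes free first) as a stand-in; it is not the scheduler used in the ILIAD fleet management layer, and the task names, durations, and robot names are made up.

```python
def allocate(tasks, ready_at):
    """Greedily assign each task to the robot that becomes free first.

    tasks: list of (task_name, duration_s) in the order they must be started.
    ready_at: {robot_name: time_s at which the robot is available}.
    Returns {robot_name: [task_name, ...]}.
    """
    free = dict(ready_at)
    plan = {robot: [] for robot in free}
    for name, duration in tasks:
        # Ties are broken by robot name so the result is deterministic.
        robot = min(free, key=lambda r: (free[r], r))
        plan[robot].append(name)
        free[robot] += duration
    return plan

tasks = [("pick_A", 60), ("pick_B", 60), ("pick_C", 60), ("pick_D", 60)]

# Nominal schedule: both robots are available immediately.
nominal = allocate(tasks, {"R1": 0, "R2": 0})

# R2 reports an unexpected 150 s delay, so the schedule is recomputed.
delayed = allocate(tasks, {"R1": 0, "R2": 150})

print(nominal)
print(delayed)
```

In this toy case, the delay shifts most of the work to R1, which mirrors the two schedule tables described above: one before and one after the delay.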

We demonstrated that the trucks can plan paths in the environment and coordinate their motion so that they automatically give way in sections where their paths overlap spatially.

New to MS3 was that we demonstrated the integration of flow-aware mapping, motion planning, and coordination. We also demonstrated coordination of two different truck platforms: one large Toyota BT SAE200, and one small Linde CiTi truck. The fleet can learn statistical human motion patterns from observation while operating, given input from the human tracking module.

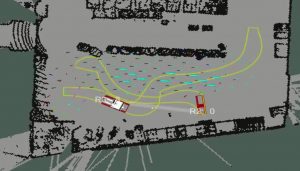

The arrows in the map in the picture below indicate statistical flow patterns that have been learned from observations using sensors mounted on one of the trucks. The yellow path envelopes show the planned paths of the two robots R2 and R3 (including the area they will sweep while turning). R3 plans to go from left to right in this image, and R2 from right to left. Both robots plan paths that, to the extent possible, avoid the expected flow of people as well as collisions with other robots and obstacles. The arrow pointing from R2 to R3 indicates that R2 will not enter the region where the paths intersect until R3 has passed. These constraints are computed on the fly by the coordinator module.

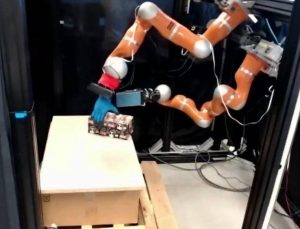
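The precedence constraint between R2 and R3 described above can be sketched as follows. This is a simplified illustration, not the ILIAD coordinator: paths are assumed to be lists of grid cells rather than swept envelopes, the priority order is given by the caller rather than computed, and all function names are hypothetical.

```python
def precedence_constraint(path_first, path_second):
    """Return (stop_index, release_index), or None if the paths never overlap.

    The yielding robot (path_second) may not move beyond stop_index until the
    robot with priority (path_first) has moved beyond release_index.
    """
    shared = set(path_first) & set(path_second)
    if not shared:
        return None
    enter_second = min(i for i, c in enumerate(path_second) if c in shared)
    exit_first = max(i for i, c in enumerate(path_first) if c in shared)
    return enter_second - 1, exit_first

def may_advance(progress_second, progress_first, constraint):
    """True if the yielding robot may move to the next cell on its path."""
    if constraint is None:
        return True
    stop_index, release_index = constraint
    return progress_second < stop_index or progress_first > release_index

# R3 drives left to right; R2 drives right to left and crosses R3's path.
r3_path = [(0, 1), (1, 1), (2, 1), (3, 1), (4, 1)]
r2_path = [(4, 0), (3, 0), (3, 1), (2, 1), (2, 2), (1, 2)]

constraint = precedence_constraint(r3_path, r2_path)
print(constraint)
print(may_advance(1, 2, constraint))  # R3 is still inside the shared region
print(may_advance(1, 4, constraint))  # R3 has left the shared region
```

R2 can approach the shared region freely, but it waits at the last cell before the overlap until R3 has cleared it.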

The manipulation demonstration was shown via video link from Pisa, as the arms will not be physically mounted on a robot for fully integrated manipulation until the final Milestone 4 demo. The two main additions to the MS3 demo over last year’s demo were (1) more advanced plastic cutting, which also handles objects whose position is not exactly known in advance and objects with uneven shapes such as the one in the picture below, and (2) integration of ORU’s object detection module with the manipulation planning and execution from UNIPI. Also demonstrated via live video link, from the Technical University of Munich, was the “safe motion unit” (SMU), which enforces biomechanically safe motion of the robot arm in the vicinity of people. Here too, a new addition compared to the MS2 demo was the integration of computer vision, so that the SMU is activated automatically when a human enters the robot arm’s reachable space.

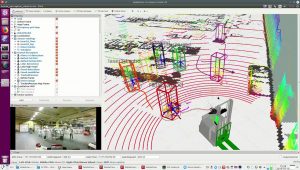

In the demonstration of the people detection and tracking stack used and developed in ILIAD, we showed full integration of all detectors, including 2D and 3D laser, RGB-D camera, and the Retenua “emitrace” camera for reflective vest detection. The picture below shows an excerpt of this demo, which also illustrates how the sensor modalities complement each other. In this particular example, the emitrace camera is the only sensor that detects the person lying down; the RGB-D camera and 3D laser, but not the emitrace, detect the persons without safety vests in front of the robot; and only the 3D laser detects persons far away, outside the field of view of the cameras.

Finally, we demonstrated mutual communication of intents via, respectively, eye-tracking glasses on a person and a projector on one of the robots. One of the robots is equipped with a projector that displays an arrow on the floor in front of it, indicating its current movement/turning direction. The integration with the eye-tracking glasses is implemented such that when the person is not looking at the robot, the arrow is blinking in order to get the attention of the person; and when the person is observing the robot, the arrow is displayed statically.
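The gaze-dependent arrow behaviour described above amounts to a small display rule, sketched here. The function name and the blink period are assumptions for illustration; the actual projector interface and timing used in the demo are not given in this post.

```python
def arrow_visible(person_looking_at_robot, t, blink_period_s=1.0):
    """Return True if the projected arrow should be lit at time t (seconds).

    Hypothetical rule: the arrow is static while the person observes the
    robot, and blinks (on for the first half of each period) otherwise.
    """
    if person_looking_at_robot:
        return True
    return (t % blink_period_s) < blink_period_s / 2

# Person is looking at the robot: arrow stays on.
print(arrow_visible(True, 0.7))
# Person is looking away: arrow alternates between on and off.
print(arrow_visible(False, 0.2), arrow_visible(False, 0.7))
```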

In conclusion, this milestone demonstration showed the most important capabilities of the ILIAD system. Most of them were integrated on the same physical fleet, running live at the end-user site, while the manipulation components were demonstrated via live video links from separate locations. At the final Milestone 4 demo, we will demonstrate full integration of manipulation with the rest of the system, as well as the final versions of the remaining components.