As of June 2021, the ILIAD project is over. It has been quite a journey, and we are very happy with the scientific contributions we have made to navigation, perception, planning, coordination, and manipulation for robots.

Notable outcomes include: new methods for robust and reliability-aware mapping and localisation for self-driving robots; long-term learning and environment adaptation through human-aware flow maps; advances in the state of the art in reliable people detection for safety under difficult conditions; biomechanically safe robot motions and safe, intuitive human-robot interaction; automatic fleet coordination and motion planning; and flexible object picking and placing. All in all, 119 scientific publications have come out of the ILIAD project, as well as several patents and startup companies.

Final demo

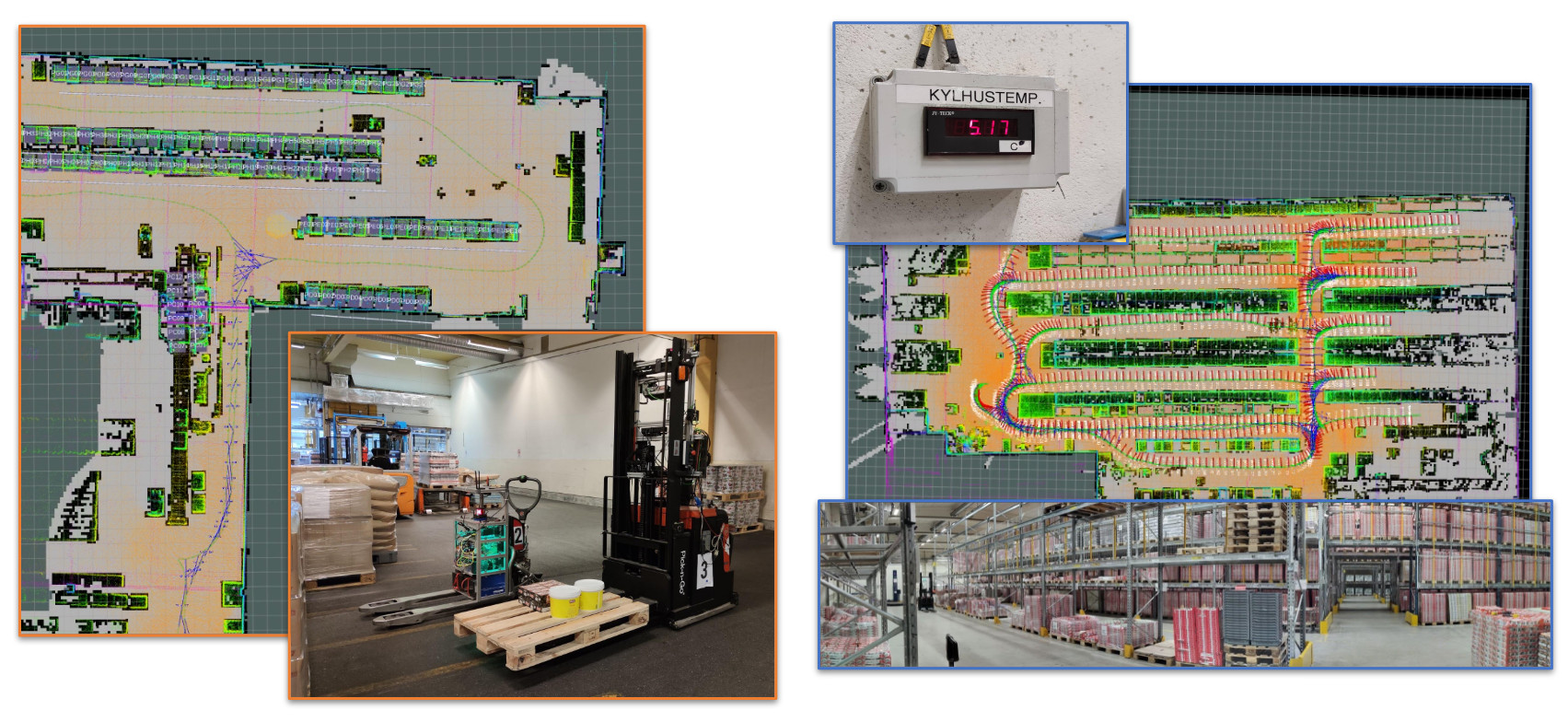

The final system demonstrations were carried out live, during day-time operations, in two different warehouses at Orkla Foods in Örebro, Sweden. Although the Covid-19 pandemic certainly made it difficult to fully integrate all the technologies developed by the partners in the ILIAD consortium, we are happy that we were still able to show how much of it can be used for easy deployment in a live environment and for safe operation together with people.

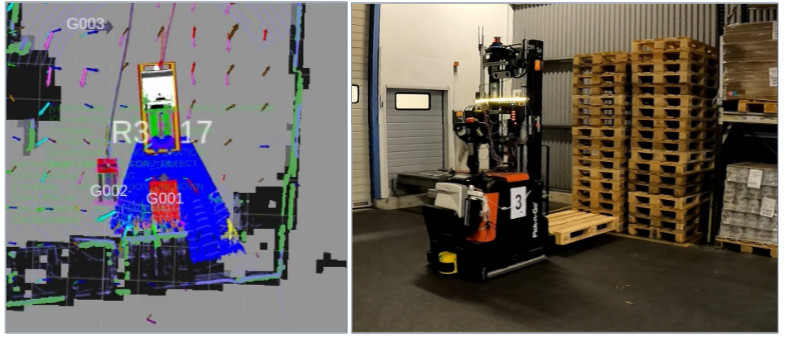

In a nutshell, in the final set of demonstrations we have shown deployment of a fleet of autonomous forklift trucks in two warehouses, where the time from powering on the computer to the start of the first mission is less than an hour.

Our deployment procedure includes the tasks of sensor calibration, mapping and map verification, semantic mapping, and map cleaning. The result is a 3D map of the warehouse, with all the shelves marked up, ready to be used for operation.

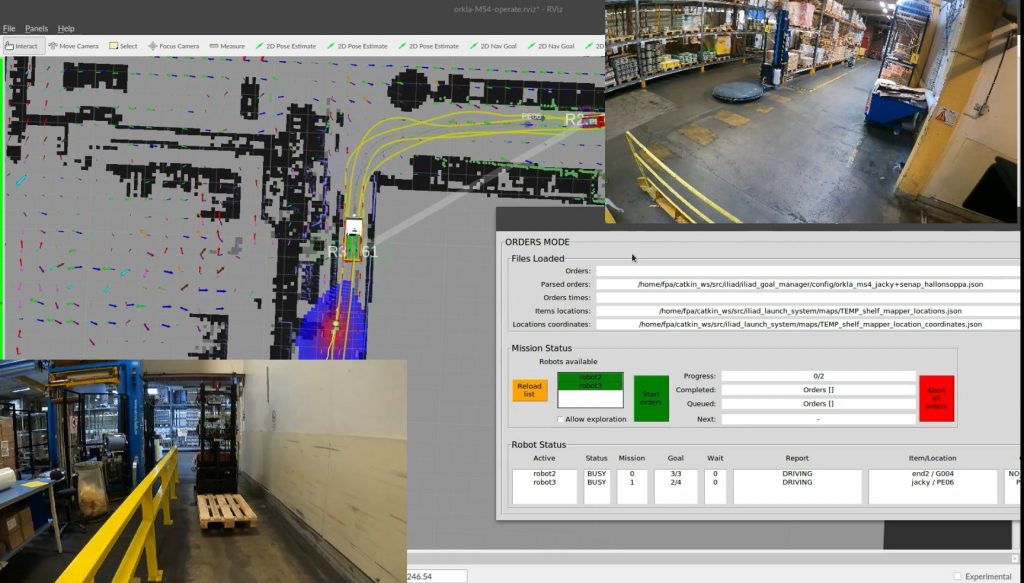

After this initial deployment, the ILIAD system performs fleet management and task assignment to pick up goods in the right order and load them on pallets. The trucks autonomously plan their paths while taking human motion patterns into account, track and avoid nearby people, and coordinate their motion between themselves. Finally, the ILIAD manipulation system is able to autonomously unwrap objects and pick & place them on pallets.

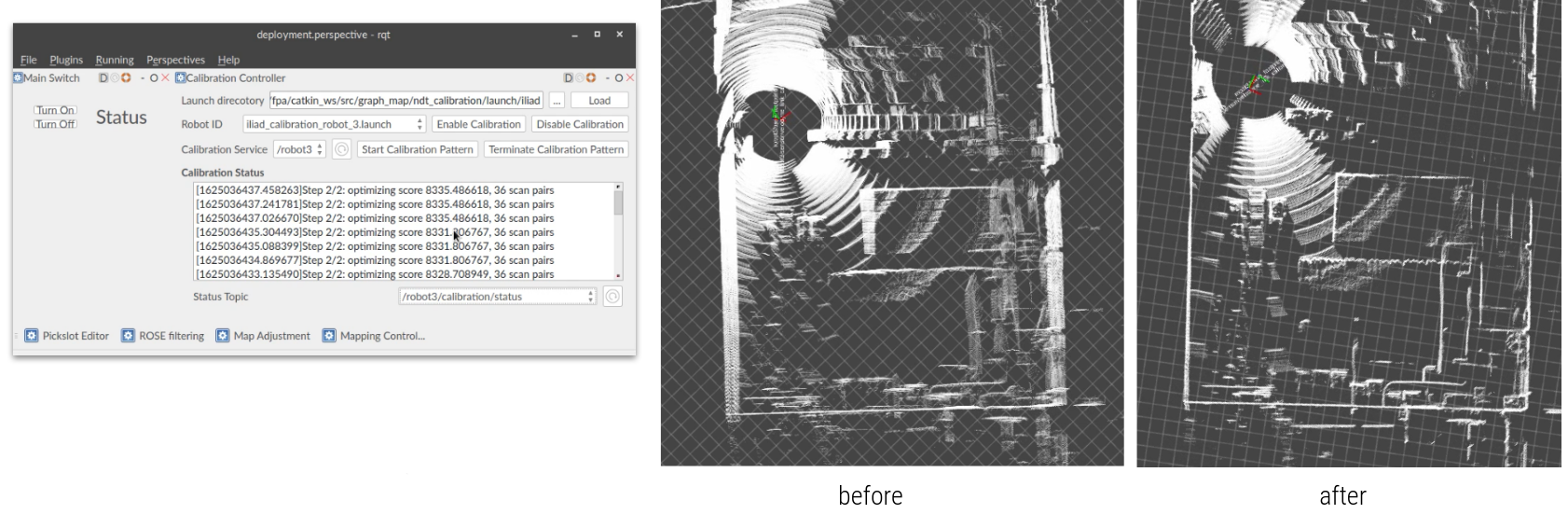

Sensor calibration

Once a new truck enters the warehouse, we run automatic sensor calibration. With a push of a button, and driving the truck in a small loop a few times, the position of the truck’s range sensors is automatically calibrated to ensure that the perception of walls and people around the robot is precise. The effect can be seen in the example below, where the room looks blurry before calibration, and much sharper when calibration is complete.

Mapping

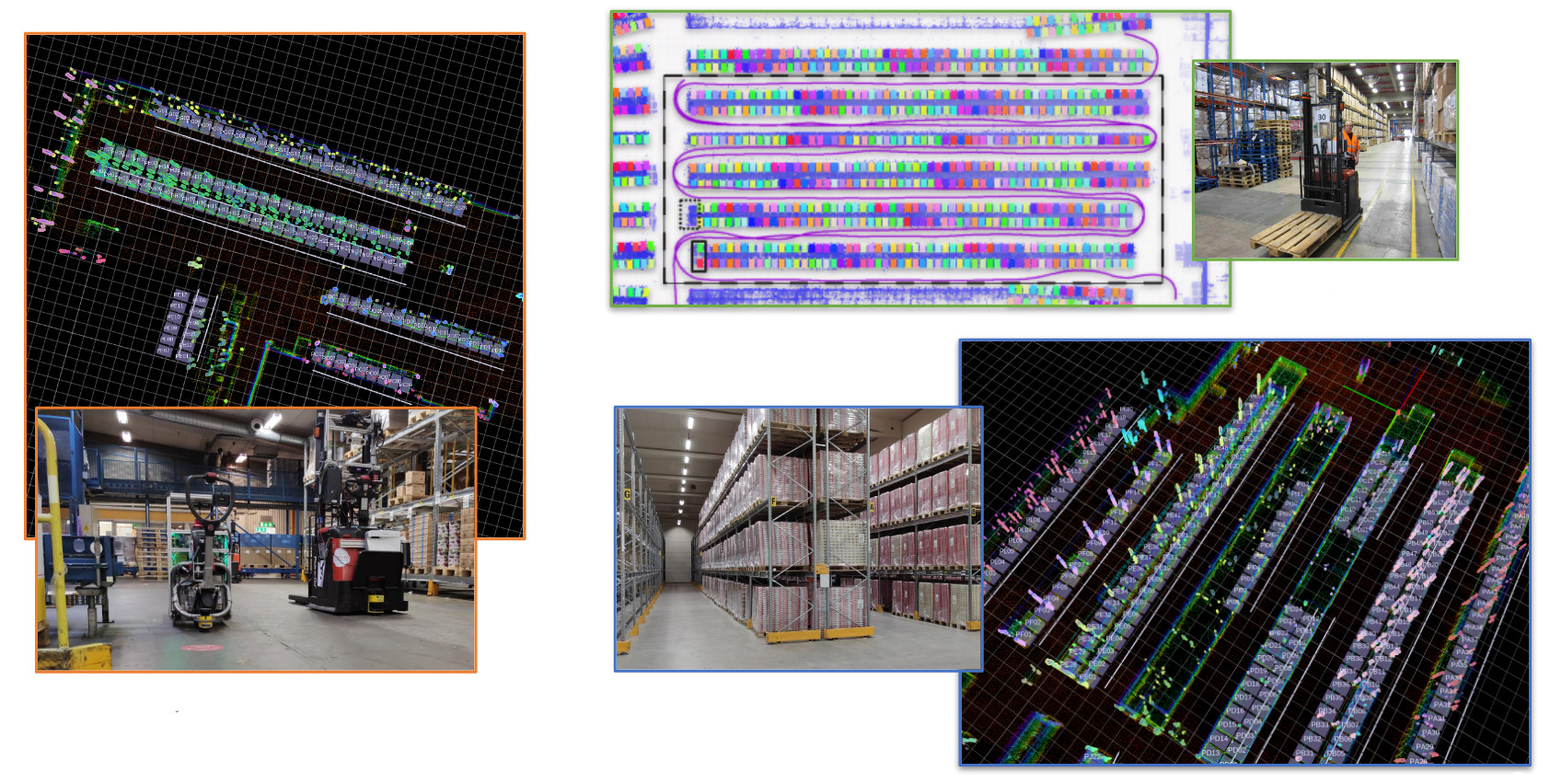

With sensors calibrated, one of the robots, which has a 3D laser range finder, is driven around the warehouse to construct a map. We have implemented a new verification procedure that can automatically check the map for misalignments and make corrections. We also include a tool for automatically separating “structure” from “clutter”, which makes it easier to keep only the static structure in the map and clean it of stray objects that would otherwise be recorded as part of the environment. The resulting 3D map can also be used for navigation and localisation by other types of robots with less costly 2D sensors. We have also developed methods for aligning maps made with different sensors, so that robots of different makes can still easily cooperate and share the same environment.

For a warehouse robot, it is not enough to have a map of walls and floor alone. It must also know where all the shelves are, so that it can collect orders from the right places. The demonstration also includes a method that analyses the 3D map and labels all shelves, saving a lot of tedious labour that would otherwise go into manually annotating the map with all the shelf positions.

At this point, the fleet of robots is ready for orders. The whole procedure takes less than an hour, saving weeks of work that would normally be required for designing map layouts and paths, calibration and tuning.

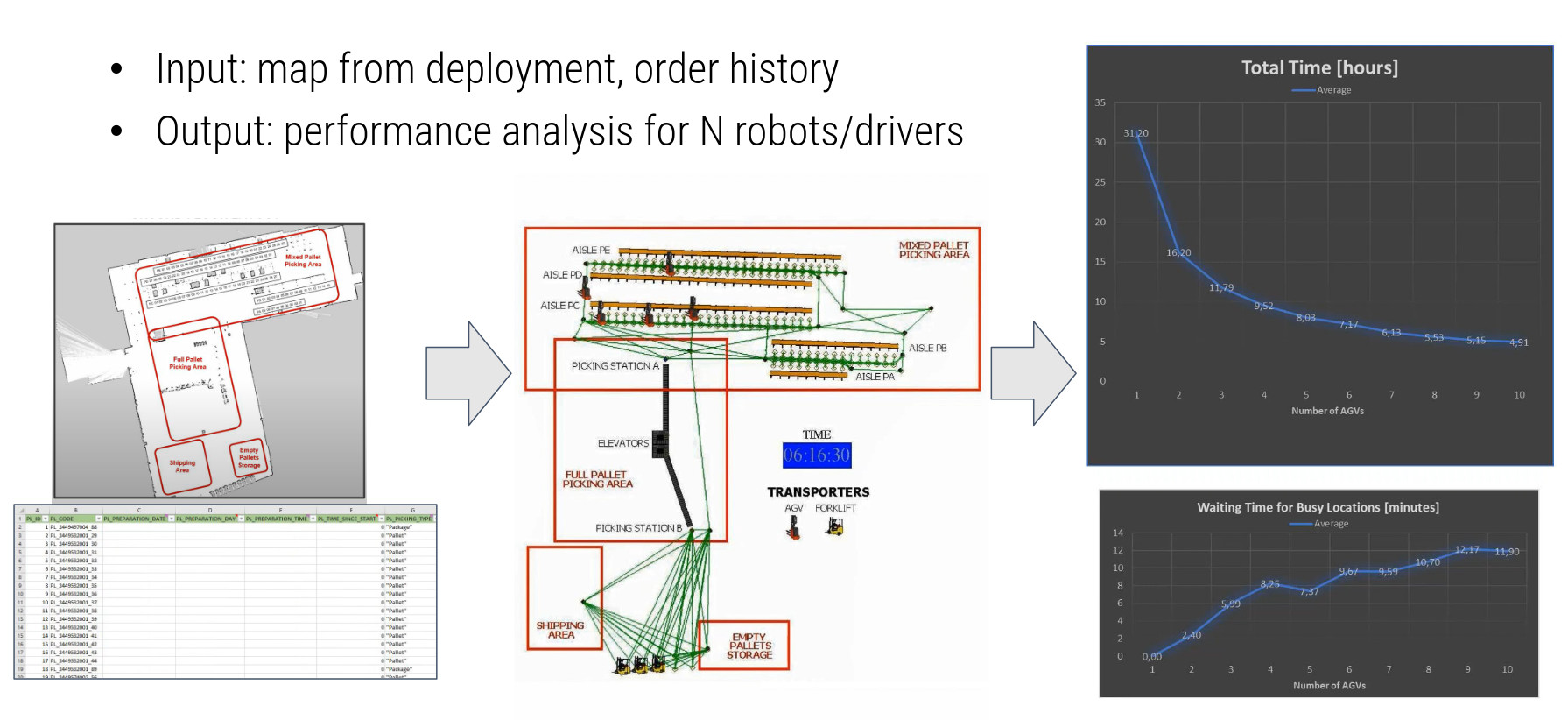

Simulation for optimising operations

Once the automatically annotated and verified 2D and 3D maps are available, the end user will likely want to make an informed decision about the number and types of robots to deploy in the warehouse. Running a stochastic discrete event simulator, which we have improved and extended during ILIAD, we can simulate outcomes with different numbers of self-driving and manually operated trucks, using site-specific map data and real orders.
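To give a flavour of what a stochastic discrete event simulation does, here is a minimal sketch in Python. It is not the ILIAD simulator: the function name `simulate_fleet`, the exponential arrival and service times, and all parameter values are assumptions made purely for illustration, and no map data or real orders are involved. Orders arrive at random times, each order occupies one truck for a random duration, and the simulation reports how long orders wait on average for a free truck.

```python
import heapq
import random
from collections import deque


def simulate_fleet(num_trucks, num_orders, mean_interarrival=2.0,
                   mean_service=5.0, seed=0):
    """Return the mean time an order waits before a truck starts on it.

    Illustrative only: time units and distributions are assumed, not measured.
    Requires num_orders > 0.
    """
    rng = random.Random(seed)
    events = []  # min-heap of (time, sequence number, kind)

    # Pre-generate all order arrivals with random gaps between them.
    t = 0.0
    for i in range(num_orders):
        t += rng.expovariate(1.0 / mean_interarrival)
        heapq.heappush(events, (t, i, "order_arrives"))

    seq = num_orders          # unique sequence numbers break ties in the heap
    free_trucks = num_trucks
    waiting = deque()         # arrival times of orders not yet started (FIFO)
    total_wait = 0.0

    while events:
        now, _, kind = heapq.heappop(events)
        if kind == "order_arrives":
            waiting.append(now)
        else:                 # "truck_done": a truck becomes available again
            free_trucks += 1

        # Start as many waiting orders as there are free trucks.
        while free_trucks > 0 and waiting:
            total_wait += now - waiting.popleft()
            free_trucks -= 1
            done = now + rng.expovariate(1.0 / mean_service)
            heapq.heappush(events, (done, seq, "truck_done"))
            seq += 1

    return total_wait / num_orders
```

Calling `simulate_fleet(n, 200)` for several values of `n` gives a rough feel for how the waiting time changes with fleet size. A real study would replace the assumed distributions with site-specific travel times and order data.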

Pallet planning and picking

When a new order comes in via the warehouse management system, mixed-pallet orders are first processed by the OPT Loading software, which plans how each object should be stacked on each pallet for efficiency and stability. The fleet management layer then sends tasks to the robots in the fleet, which pick up pallets and products in the planned order to fill each pallet.

Once tasks have been assigned by the ILIAD goal manager, so that each robot knows what to pick in which order, the robots first go to pick up a pallet. The position and orientation of each pallet are only roughly known, because pallets may not be placed exactly where expected, especially in warehouses that are shared with humans. The robots therefore use a fork-facing camera to detect exactly where the pallet is before picking it up.

Motion planning

The robots perform on-the-fly motion planning to compute how to drive to each of their assigned goals. The fleet needs to be flexible to changes in the environment, both because shelves and other objects might be rearranged, and because the warehouse is naturally populated by moving people and other trucks. We have implemented an ensemble of planners that address the challenging cases that often occur in warehouse logistics: long narrow passages, natural and safe motion around people, and planning long paths in a short amount of time. Another noteworthy contribution is a new benchmark for evaluating and comparing motion planning algorithms.

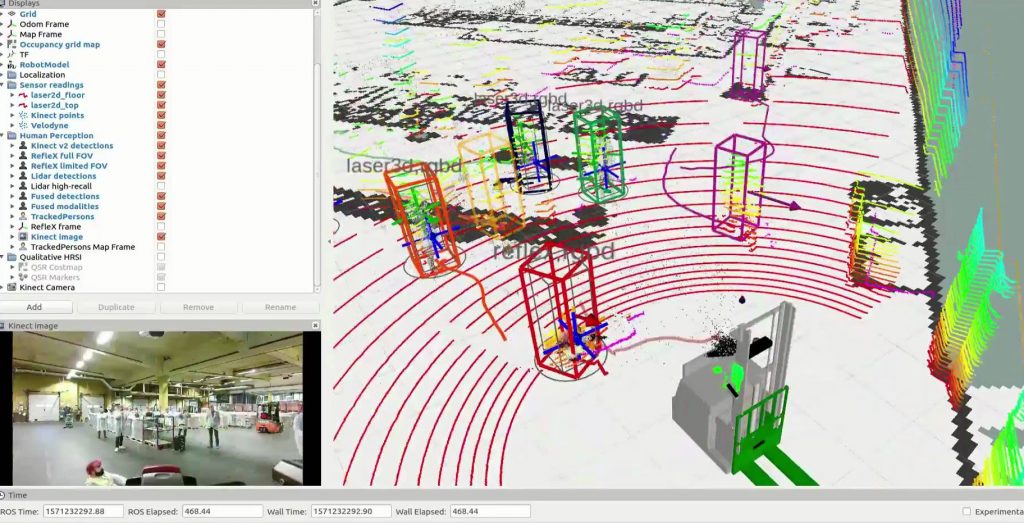

Safe human-aware navigation

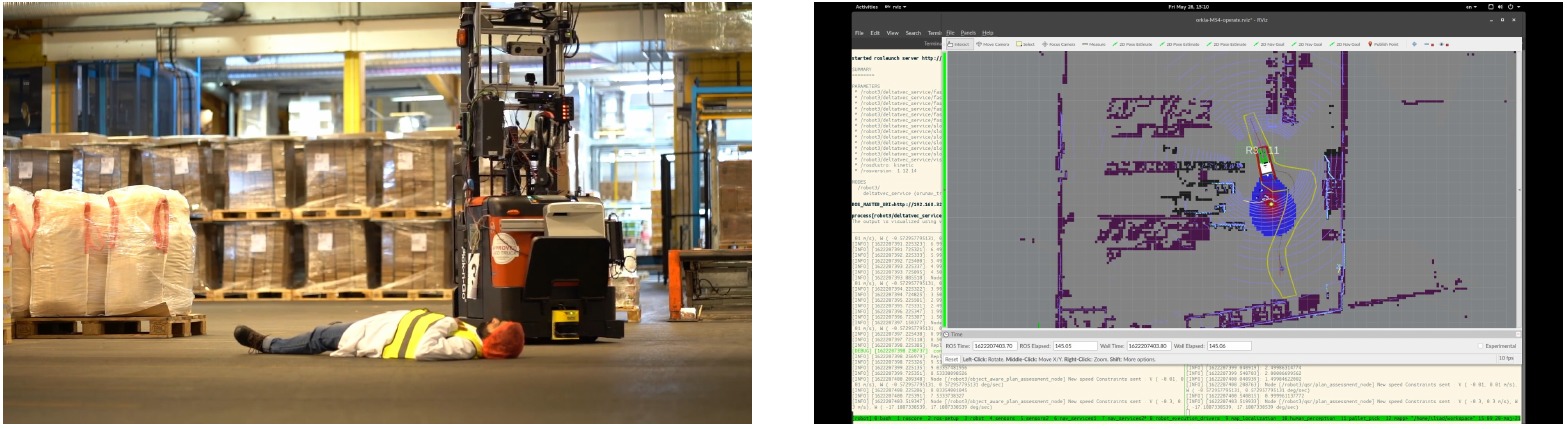

The self-driving truck fleet shares the space with humans, so detecting and safely adapting to people is imperative. Our people detection and tracking pipeline combines several types of sensors: 2D and 3D laser scanning, colour and depth camera data, as well as a specifically developed camera for detecting safety vests. Several state-of-the-art detection approaches that process this sensor data have been developed during the project, and run in real time onboard each robot.

The robots also adapt their speeds using constraints computed from a “qualitative trajectory calculus” that estimates the “social cost” of different kinds of motion as well as a “vehicle safe motion unit” that ensures injury-free velocities computed using the shape and mass of the robot, and specific body parts. The image below shows a particular corner case, where a person has “fainted” in the robot’s path. Detecting people who are lying down is often challenging for laser- and camera-based detectors, but with our people detection and human-aware navigation systems, the robot still detects them as a person (and not just any obstacle) and safely navigates around them.

Coordination and fleet management

In addition, the robots need to coordinate between themselves who goes first. This is also a challenging problem in dynamic environments with many robots. Especially in warehouse-like environments with many aisles, robots may be blocked or end up in a deadlock, where robot A waits for robot B, which waits for robot C, and so on. In ILIAD, we have developed algorithms that are guaranteed to prevent deadlocks and to recover from them should they happen, as well as methods for safe, robust and flexible online coordination in dynamic environments, even when the robots’ wifi connection is unreliable. The image below shows an example from the live demonstration, where one robot waits for another, based on constraints that are computed in space and time, knowing where the robots may be at any point in time.
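The circular wait described above can be made concrete with a small Python sketch. This is not the ILIAD coordination algorithm, which reasons over constraints in space and time. It only shows how a deadlock appears as a cycle in a "who waits for whom" relation. The function name `find_deadlock` and the dictionary representation are assumptions for illustration, and each robot is assumed to wait for at most one other robot.

```python
def find_deadlock(waits_for):
    """Return the robots forming a circular wait, or None if there is none.

    `waits_for` maps a robot name to the single robot it is waiting for
    (hypothetical representation, for illustration only).
    """
    for start in waits_for:
        path = []       # robots visited from `start`, in order
        seen = set()
        robot = start
        # Follow the chain of waits until it ends or revisits a robot.
        while robot in waits_for and robot not in seen:
            seen.add(robot)
            path.append(robot)
            robot = waits_for[robot]
        if robot in seen:
            # The chain came back to a robot already on the path: a cycle.
            return path[path.index(robot):]
    return None
```

For example, `find_deadlock({"A": "B", "B": "C", "C": "A"})` reports the cycle among A, B and C, whereas a plain chain such as `{"A": "B", "B": "C"}` yields `None` because robot C is free to move.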

Object picking

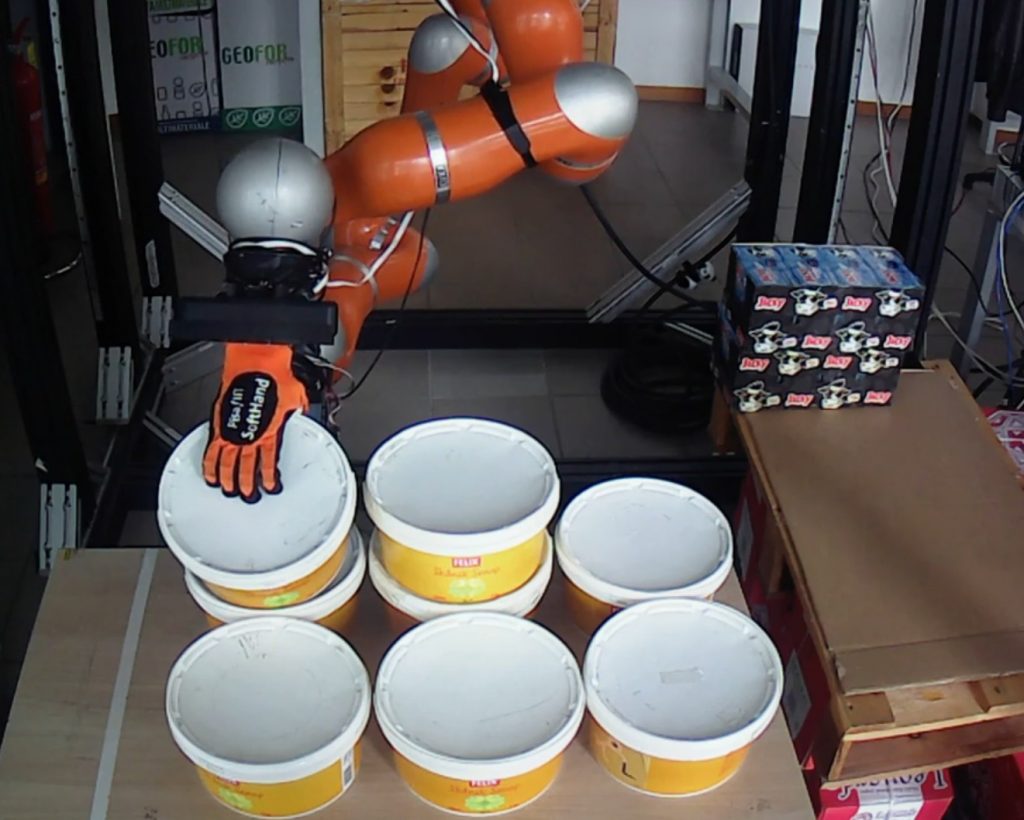

Once a truck reaches a shelf, it needs to pick objects and place them on a pallet. This is, after all, one of the core tasks for a warehouse robot. In ILIAD, we have worked on perception for manipulation (detecting where each object is on a pallet, and whether or not a pallet is wrapped in plastic), on automatic cutting of plastic, and on picking & placing of objects.

New pallets that enter the warehouse are wrapped in plastic, which needs to be cut before objects can be picked. We have developed an unwrapping system that comprises a novel cutting blade as well as algorithms for compliant control, in order to perform the delicate manoeuvre of cutting plastic wrap around odd-sized stacks of objects without damaging the objects.

One central part of the ILIAD project is flexible picking & placing (object manipulation). In order to be truly useful, these warehouse robots should be able to pick a large variety of objects without having to change tools. Some of the challenges are that objects come in very different shapes and sizes, and often cannot be picked with a vacuum gripper from the top, which is otherwise a common strategy. Instead, what we have developed as part of ILIAD is a two-arm system, with one five-fingered hand and one tray-like “hand”, which is able to pick much like a person would. In this way, we can pick open-top boxes, buckets, and cans with the same system.

Long-term adaptation

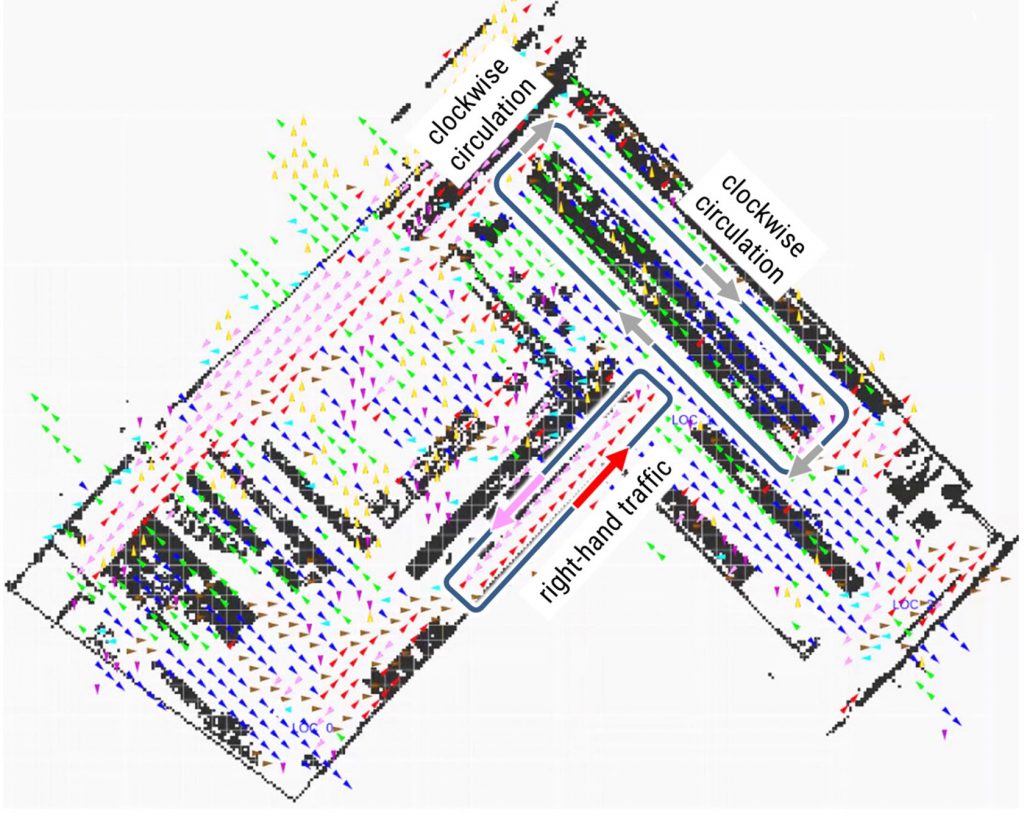

While going about their daily tasks, the robots also explore to learn about site-specific activity patterns. These patterns, or “maps of dynamics”, can then be used by any robot in the fleet for planning paths that are better aligned with how people usually move in the warehouse. This is important for both safety and efficiency, so that the robots don’t try to go against the normal flow, or go where a lot of people are expected to be. The way in which the robots learn such implicit “traffic rules” themselves, rather than relying on manually defined rules and routes, is quite novel to ILIAD and aims to make the fleet self-improving over time.
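As a rough illustration of the idea, the Python sketch below keeps an average direction of observed motion per grid cell and penalises robot motion that goes against it. It is a greatly simplified stand-in, not the ILIAD maps of dynamics: the class name `FlowMap`, the grid-cell keys, and the cost formula are all assumptions made for this example.

```python
import math
from collections import defaultdict


class FlowMap:
    """Per-cell average of observed motion directions (illustrative only)."""

    def __init__(self):
        # cell -> [sum of cos(heading), sum of sin(heading), observation count]
        self._sums = defaultdict(lambda: [0.0, 0.0, 0])

    def observe(self, cell, heading):
        """Record that someone moved through `cell` with `heading` (radians)."""
        s = self._sums[cell]
        s[0] += math.cos(heading)
        s[1] += math.sin(heading)
        s[2] += 1

    def cost(self, cell, heading):
        """Penalty for driving through `cell` with `heading`.

        0 when moving with the learned flow (or where nothing was observed),
        up to 2 when moving directly against a perfectly consistent flow.
        """
        if cell not in self._sums:
            return 0.0
        sx, sy, n = self._sums[cell]
        mean_x, mean_y = sx / n, sy / n
        # Length of the mean vector: near 1 for consistent flow, near 0 for mixed.
        strength = math.hypot(mean_x, mean_y)
        # Projection of the mean flow onto the robot's own direction.
        alignment = mean_x * math.cos(heading) + mean_y * math.sin(heading)
        return strength - alignment
```

A path planner could add such a cost to each candidate path segment, so that routes following the usual flow are preferred. Cells where people move in many different directions have a weak mean flow and therefore contribute little penalty in this simplified model.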

Conclusion

In conclusion, ILIAD has made many scientific contributions that will help make highly flexible robot fleets possible in warehouse logistics applications, in environments shared with humans. Methods for quick and easy deployment will be useful for enabling a transition to automation, so that smaller companies too can include self-driving robots in their warehouse fleets, without having to modify their site or spend a lot of time and money on deployment projects. Highly reliable people detection and algorithms for safe navigation around people will make it possible to drive both safely and efficiently in shared spaces.

We already see the first results from our exploitation efforts: ILIAD results have been incorporated in two Bosch products (market entry in 2020 and 2022), and more are likely to follow. ILIAD has also contributed to a number of new research and innovation projects that will continue different aspects of the research in ILIAD. So even if ILIAD is now over, the work we have done will live on for a long time to come!